
In the wake of ChatGPT, every company is trying to figure out its AI strategy, work that quickly raises the question: What about security?

Some may feel overwhelmed at the prospect of securing new technology. The good news is that policies and practices in place today provide excellent starting points.

Indeed, the way forward lies in extending the existing foundations of enterprise and cloud security. It’s a journey that can be summarized in six steps:

- Expand analysis of the threats

- Broaden response mechanisms

- Secure the data supply chain

- Use AI to scale efforts

- Be transparent

- Create continuous improvements

Take in the Expanded Horizon

The first step is to get familiar with the new landscape.

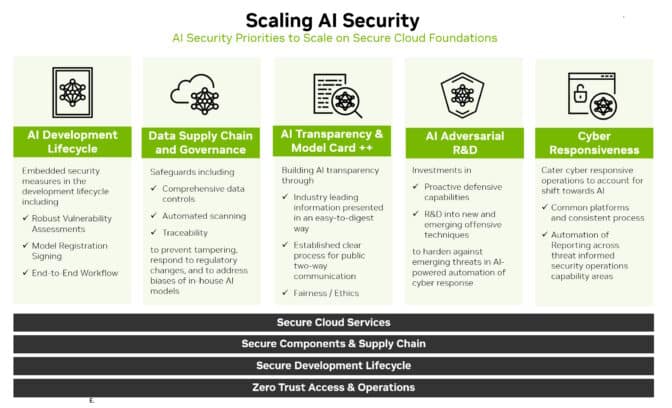

Security now needs to cover the AI development lifecycle. This includes new attack surfaces like training data, models, and the people and processes using them.

Extrapolate from the known types of threats to identify and anticipate emerging ones. For instance, an attacker might try to alter the behavior of an AI model by accessing its data while the model is being trained on a cloud service.

The security researchers and red teams who probed for vulnerabilities in the past will be great resources again. They’ll need access to AI systems and data to identify and act on new threats, as well as help building solid working relationships with data science staff.

Broaden Defenses

Once a picture of the threats is clear, define ways to defend against them.

Monitor AI model performance closely. Assume it will drift, opening new attack surfaces, just as it can be assumed that traditional security defenses will be breached.
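
One way to make drift monitoring concrete is to compare the distribution of a model’s recent output scores against a baseline captured at deployment. The sketch below uses the population stability index (PSI), a common drift statistic; the function name, the bin count and the 0.2 alert threshold are illustrative assumptions, not part of any NVIDIA tooling.

```python
import math
from collections import Counter


def population_stability_index(baseline, current, bins=10):
    """Return the PSI between two samples of a numeric model score.

    Bin edges come from the baseline range; values outside that range
    are clamped into the first or last bin.
    """
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def bucket_shares(values):
        counts = Counter()
        for value in values:
            index = int((value - lo) / width)
            counts[max(0, min(bins - 1, index))] += 1
        total = len(values)
        # A small floor keeps empty bins from producing log(0).
        return [max(counts[i] / total, 1e-6) for i in range(bins)]

    base_shares = bucket_shares(baseline)
    curr_shares = bucket_shares(current)
    return sum(
        (c - b) * math.log(c / b) for b, c in zip(base_shares, curr_shares)
    )


# Assumed rule of thumb: treat PSI above 0.2 as drift worth investigating.
DRIFT_THRESHOLD = 0.2

if __name__ == "__main__":
    baseline_scores = [i / 100 for i in range(100)]
    recent_scores = [min(0.99, s + 0.5) for s in baseline_scores]
    psi = population_stability_index(baseline_scores, recent_scores)
    print("drift suspected" if psi > DRIFT_THRESHOLD else "stable")
```

In practice the threshold and the monitored score would need tuning per model, and an alert like this would feed the same incident response process as any other security signal.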

Also build on the PSIRT (product security incident response team) practices that should already be in place.

For example, NVIDIA released product security policies that encompass its AI portfolio. Several organizations, including the Open Worldwide Application Security Project (OWASP), have released AI-tailored implementations of key security elements such as the common vulnerability enumeration method used to identify traditional IT threats.

Adapt and apply to AI models and workflows traditional defenses like:

- Keeping network control and data planes separate

- Removing any unsafe or personally identifying data

- Utilizing zero-trust safety and authentication

- Defining appropriate event logs, alerts and tests

- Setting flow controls where appropriate
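
As an illustration of one item in the list above, removing personally identifying data before it reaches a training set, here is a minimal sketch. The two patterns (email addresses and US-style Social Security numbers) and the placeholder tokens are assumptions chosen for the example; a production pipeline would need far broader coverage and review.

```python
import re

# Illustrative patterns only; real PII detection needs many more cases.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
US_SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def redact(text):
    """Replace matched identifiers with fixed placeholder tokens."""
    text = EMAIL.sub("[EMAIL]", text)
    text = US_SSN.sub("[SSN]", text)
    return text


if __name__ == "__main__":
    record = "Contact jane.doe@example.com, SSN 123-45-6789."
    print(redact(record))  # Contact [EMAIL], SSN [SSN].
```

Running redaction as a logged step in the data pipeline also supports the event-log item in the same list, since each scrubbed record can be counted and audited.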

Extend Existing Safeguards

Protect the datasets used to train AI models. They’re valuable and vulnerable.

Once again, enterprises can leverage existing practices. Create secure data supply chains, similar to those created to secure channels for software. It’s important to establish access control for training data, just as other internal data is secured.

Some gaps may need to be filled. Today, security specialists know how to use hash files of applications to ensure no one has altered their code. That process may be challenging to scale for petabyte-sized datasets used for AI training.

The good news is that researchers see the need, and they’re working on tools to address it.
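
The hash-file approach mentioned above can be sketched for a directory of dataset shards as follows. This is a minimal illustration that assumes SHA-256 and an in-memory manifest; the function names are hypothetical. It streams each file in chunks so memory use stays flat, but it still reads every byte, which is exactly the cost that becomes hard to bear at petabyte scale.

```python
import hashlib
from pathlib import Path

CHUNK_BYTES = 1024 * 1024  # read 1 MiB at a time to bound memory use


def file_sha256(path):
    """Return the SHA-256 hex digest of one file, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(CHUNK_BYTES), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root):
    """Map each file's path (relative to root) to its digest."""
    root = Path(root)
    return {
        path.relative_to(root).as_posix(): file_sha256(path)
        for path in sorted(root.rglob("*"))
        if path.is_file()
    }


def changed_files(root, manifest):
    """List files that were added, removed or altered since the manifest."""
    current = build_manifest(root)
    return sorted(
        name
        for name in set(manifest) | set(current)
        if manifest.get(name) != current.get(name)
    )
```

A team would typically store the manifest separately from the data, under its own access controls, and compare against it before each training run.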

Scale Security With AI

AI is not only a new attack area to defend, it’s also a new and powerful security tool.

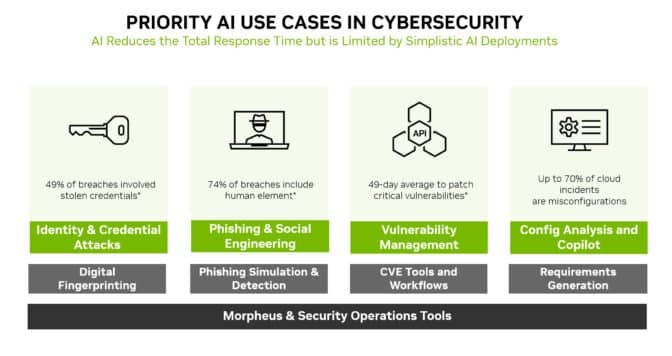

Machine learning models can detect subtle changes no human can see in mountains of network traffic. That makes AI an ideal technology to prevent many of the most widely used attacks, like identity theft, phishing, malware and ransomware.

NVIDIA Morpheus, a cybersecurity framework, can build AI applications that create, read and update digital fingerprints that scan for many kinds of threats. In addition, generative AI and Morpheus can enable new ways to detect spear phishing attempts.
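
To make the idea of flagging deviations from a traffic baseline concrete, here is a deliberately simple statistical stand-in: a rolling z-score over per-interval byte counts. It is not a machine learning model and not how any particular product works; the function name, window size and threshold are assumptions for illustration.

```python
from statistics import mean, stdev


def flag_anomalies(byte_counts, window=5, threshold=3.0):
    """Return indexes whose value deviates sharply from the prior window.

    Each point is compared with the mean and standard deviation of the
    `window` observations immediately before it.
    """
    flagged = []
    for i in range(window, len(byte_counts)):
        history = byte_counts[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            continue  # a perfectly flat history gives no scale to compare against
        if abs(byte_counts[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


if __name__ == "__main__":
    traffic = [100, 102, 98, 101, 99, 100, 103, 97, 500, 101]
    print(flag_anomalies(traffic))  # [8]
```

A learned model replaces the fixed mean-and-deviation baseline with patterns inferred from far richer features, which is what lets it catch changes too subtle for a rule like this.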

Security Loves Clarity

Transparency is a key component of any security strategy. Let customers know about any new AI security policies and practices that have been put in place.

For example, NVIDIA publishes details about the AI models in NGC, its hub for accelerated software. Called model cards, they act like truth-in-lending statements, describing AIs, the data they were trained on and any constraints for their use.

NVIDIA uses an expanded set of fields in its model cards, so users are clear about the history and limits of a neural network before putting it into production. That helps advance security, establish trust and ensure models are robust.

Define Journeys, Not Destinations

These six steps are just the start of a journey. Processes and policies like these need to evolve.

The emerging practice of confidential computing, for instance, is extending security across cloud services where AI models are often trained and run in production.

The industry is already beginning to see basic versions of code scanners for AI models. They’re a sign of what’s to come. Teams need to keep an eye on the horizon for best practices and tools as they arrive.

Along the way, the community needs to share what it learns. An excellent example of that occurred at the recent Generative Red Team Challenge.

In the end, it’s about creating a collective defense. We’re all making this journey to AI security together, one step at a time.
